Why AI Cybersecurity Is Different from Cloud Security

The rise of Generative AI and cloud adoption has triggered a cybersecurity arms race. Organizations now face AI-driven attacks at scale: by 2026, 93% of companies expect daily AI-assisted assaults, even as 70% say AI tools help detect threats that once went unnoticed.

At the same time, cloud infrastructure is booming: the cloud security market is projected to reach $121.04 billion by 2034, yet misconfigurations remain a leading source of breaches. These trends make clear that securing AI systems is not the same as securing cloud servers. AI adds new layers of risk (maliciously poisoned data, stolen models, adversarial inputs, etc.) that traditional cloud tools simply weren’t designed to address.

In short, the AI era demands a new security mindset. This article explores why AI cybersecurity (protecting machine-learning models, training data, and AI pipelines) fundamentally differs from traditional cloud security. We’ll survey how cloud security works today, uncover AI’s unique attack surface, and explain why existing cloud controls fall short for AI workloads. We’ll also outline AI-specific threats (like data poisoning and model inversion) and propose how enterprises can build an AI-secure posture (with frameworks like NIST’s AI RMF, OWASP LLM Top 10, MITRE ATLAS, etc.). Finally, we show how Cygeniq’s platform secures AI environments, and conclude with a real-world cautionary example.

Understanding Traditional Cloud Security

Cloud security is about safeguarding infrastructure and data in cloud environments. It focuses on protecting compute, storage, and network layers using well-established controls. As one industry analyst notes, cloud security “revolves around safeguarding data, applications, and resources hosted in cloud environments”. In practice, core cloud security capabilities include:

- Firewalls & Network Segmentation: Virtual firewalls and network controls isolate cloud workloads and services from unauthorized access.

- Encryption: Strong encryption of data at rest and in transit is fundamental. It ensures data remains unreadable even if intercepted or exfiltrated.

- Identity and Access Management (IAM): Multi-factor authentication, role-based access, and single sign-on ensure only authorized users and services can reach cloud resources.

- Endpoint and Workload Protection: Anti-malware and runtime security agents on cloud VMs and containers help prevent compromise and lateral movement.

- Continuous Monitoring & Logging: Cloud workloads are monitored 24/7, with logs and telemetry fed into SIEM or CSPM systems for threat detection.

- Incident Response Automation: Playbooks and automated response tools quickly isolate compromised workloads, revoke credentials, or spin up fresh resources when breaches occur.

These measures target the infrastructure and data layer in the cloud. They assume workloads are deterministic – static applications or VMs with predictable behavior. In this model, threats come from things like misconfigured servers, unpatched software, open ports, or stolen credentials. Cloud security tools (CSPM, CIEM, CNAPP, etc.) excel at hardening servers, securing IAM policies, detecting misconfiguration drift, and responding to network attacks.

Where Cloud Security Stops

However, cloud security has limits. It doesn’t defend the intelligence of a system, only the infrastructure. In particular:

- Static Focus: Traditional controls assume predictable workloads. AI models, by contrast, learn and evolve, so a static rule set may miss a clever AI-targeted exploit.

- No AI Privacy or Model Protection: Cloud controls don’t natively address model confidentiality. They won’t prevent an attacker from repeatedly querying a public AI endpoint to reverse-engineer the model (model extraction) or probing it to infer training data.

- Ignores Data Quality: Cloud security doesn’t validate whether training data is correct or uncompromised. A poisoner could slip malicious samples into the AI training set to subtly alter its behavior. Cloud tools won’t flag this because they don’t interpret dataset semantics.

- Alerts on Different Signals: A cloud SIEM might alert on unusual login times or data transfers, but not on an LLM generating biased outputs or an AI model showing concept drift. The signals of AI-specific attacks (e.g., rising error rates, weirdly generated content) require new detection logic.

In summary, traditional cloud security leaves a blind spot: it secures the shell (hardware, network, storage) but not the soul of AI applications. To protect AI, we must secure the models, data pipelines, and decision logic – realms where cloud tools rarely venture.

What Is AI Cybersecurity?

AI cybersecurity (also called AI security) refers to protecting AI and machine learning systems across their entire lifecycle — from data collection and model training to deployment and inference. In practice, it means securing:

- Models and Code: Protecting the trained model weights, architectures, and the code that runs them. AI models are intellectual property and business-critical assets, so their theft or corruption is disastrous.

- Training Data: Ensuring training datasets are accurate, untainted, and stored securely. If attackers poison the data, the AI may learn dangerous or biased behaviors.

- APIs and Interfaces: Securing model endpoints (APIs, prompts, UIs) so attackers cannot manipulate inputs to produce malicious outputs (e.g., prompt injection attacks).

- Outputs and Decisions: Monitoring AI outputs for anomalies or drift (unusual changes in predictions) that could signal an attack, error, or bias.

- Development Pipeline: Embedding security into the entire MLOps pipeline – code repos, continuous integration, deployment environments, and monitoring – so that AI systems are “secure by design”.

- Governance and Compliance: Applying policies, logging, and audits to AI workflows to meet regulatory and ethical standards (for example, the NIST AI Risk Management Framework or the EU AI Act).

In essence, AI cybersecurity adapts traditional CIA (confidentiality, integrity, availability) principles to the AI era. Confidentiality means guarding models and sensitive data (preventing model theft or data leaks). Integrity means assuring data/model lineage and defending against data/model poisoning so the AI’s outputs remain correct. Availability means keeping AI services online and responsive, even under attack or high load (e.g., against DoS or resource-exhaustion attacks).

Unlike the broad use of AI in security (AI for security), AI cybersecurity focuses on securing AI itself. It complements AI-enabled detection (using ML to find threats) by actually defending the ML systems from novel attacks. In other words, this is security for AI, as opposed to security with AI.

Why AI Systems Introduce a New Attack Surface

AI systems dramatically expand the attack surface in ways that cloud servers do not. For example:

- Models as Assets: AI model files (weights/checkpoints) become valuable assets. Attackers may try to steal model IP via model extraction attacks, systematically querying the model to reconstruct its logic. This is akin to stealing an algorithm or trade secret. Protecting the model itself (e.g. watermarking, strict API access controls, encrypted inference) is now paramount.

- Training Data as High-Value Targets: The training data used to build models is often sensitive or proprietary (think customer chats, medical records, or company code). Attackers may attempt data poisoning, deliberately inserting bad samples or biases into the training set to subtly corrupt the model. They might also try to infer or exfiltrate raw data from the trained model (model inversion). This shifts attention to data integrity and provenance in a way cloud IT rarely considers.

- AI-Powered Decisions: AI systems often make or inform critical decisions (e.g. loan approvals, medical diagnoses, autonomous control). Tampering with an AI can directly harm real-world outcomes. For example, a model fooled into approving fraudulent loans or misdiagnosing patients has a deeper impact than, say, a defaced web page. Attackers can weaponize AI’s outputs, from synthesizing convincing deepfakes to tricking a self-driving car’s vision system with adversarial patches.

These factors mean that AI cybersecurity extends beyond the underlying infrastructure. It treats AI models and data as first-class assets requiring specialized protection, and it considers attackers who might use sophisticated AI-driven tactics themselves. As one security expert puts it, existing tools create “blind spots” for AI workloads because they were not built to handle non-deterministic, learning-based systems.

Why AI Security Is Fundamentally Different from Cloud Security

The unique nature of AI workloads makes AI security fundamentally different from cloud security in several key ways:

1. AI Models Are Assets, Not Just Applications

A trained AI model (a set of weights and parameters) is not just software code; it embodies valuable knowledge. Large models can contain billions of parameters and are costly to train, so attackers may perform model extraction to clone this IP. Unlike a typical web app, an AI model cannot simply be “patched”; the model itself must be guarded. Moreover, adversarial attacks (small crafted inputs that cause misclassification) are unique to ML models. Standard application security doesn’t address “imperceptible” noise altering a neural network’s output. In short, AI assets require model-specific protections, such as watermarking, gradient masking, and robust model architectures, that go beyond traditional code security.

2. Training Data Integrity Is Critical

AI depends on data. If that data is corrupted, the model’s integrity falters. A poisoned dataset can introduce backdoors or biases that only become apparent at inference time. Techniques like robust data validation, provenance tracking, and differential privacy help keep the training set trustworthy. By contrast, cloud security typically focuses on protecting data confidentiality (through encryption) rather than ensuring data correctness at a fine-grained level. In AI, even well-hidden tampering matters greatly. Guidance such as NIST’s AI 600-1 Generative AI Profile now calls for documenting training data sources and accounting for poisoning attacks, highlighting how data integrity is a primary security challenge for AI.
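One simple way to make provenance checkable is to fingerprint every training record when a dataset is approved and compare fingerprints again before training. The following is a minimal sketch under stated assumptions: the record layout, the `id` field, and the function names are all hypothetical, and records are assumed to be JSON-serializable.

```python
import hashlib
import json


def record_digest(record: dict) -> str:
    """Return a SHA-256 digest of one training record in canonical JSON form."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_manifest(records: list) -> dict:
    """Map each record id to its digest at the time the dataset is approved."""
    return {rec["id"]: record_digest(rec) for rec in records}


def find_tampering(records: list, manifest: dict) -> dict:
    """Compare the current records against the approved manifest."""
    current = {rec["id"]: record_digest(rec) for rec in records}
    return {
        "modified": sorted(i for i in current if i in manifest and current[i] != manifest[i]),
        "added": sorted(i for i in current if i not in manifest),
        "removed": sorted(i for i in manifest if i not in current),
    }


# Hypothetical approved snapshot of a tiny fraud-detection dataset.
approved = [
    {"id": "a1", "text": "wire transfer request", "label": "fraud"},
    {"id": "a2", "text": "monthly newsletter", "label": "benign"},
]
manifest = build_manifest(approved)

# Simulated poisoning attempt: one label flipped, one record injected.
current = [
    {"id": "a1", "text": "wire transfer request", "label": "benign"},
    {"id": "a2", "text": "monthly newsletter", "label": "benign"},
    {"id": "a3", "text": "urgent invoice", "label": "benign"},
]
report = find_tampering(current, manifest)
print(report)
```

A check like this only detects changes made after the snapshot was approved. It says nothing about whether the approved data was clean in the first place, so it complements rather than replaces statistical validation of the data itself.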

3. Adversarial AI Attacks Are Unique

Attack vectors like adversarial examples, prompt injection, and model inversion have no cloud analogs. In adversarial attacks, attackers craft inputs (images, text, etc.) that “fool” an AI into misbehaving. For instance, tiny perturbations to a stop sign can make an AI car vision system misread it. Prompt injections exploit conversational AI by injecting malicious instructions into user input. Model inversion attacks attempt to reconstruct sensitive training data just by querying the model. These tactics target the logic of the model itself. Defending against them requires AI-specialized techniques (robust training, input sanitization, output monitoring). Traditional cloud defenses like firewalls or standard IPS have no way to see or prevent these nuanced attacks on model inference.
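To make the idea of an adversarial example concrete, here is a toy sketch in the style of the fast gradient sign method, applied to a linear classifier. The weights, bias, input, and epsilon are invented for illustration; real attacks target deep networks, but the mechanism (nudge every feature slightly in the direction that hurts the model most) is the same.

```python
def score(weights, bias, x):
    """Linear decision score: positive means 'stop sign', negative means 'other'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def fgsm_perturb(weights, x, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score.

    For a linear model the gradient of the score with respect to x is the
    weight vector, so the sign of each weight gives the worst-case direction.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


weights = [0.9, -0.6, 0.4, -0.8]  # hypothetical trained weights
bias = -0.1
x = [0.5, 0.2, 0.3, 0.1]          # hypothetical clean input, classified as 'stop sign'

clean_score = score(weights, bias, x)
x_adv = fgsm_perturb(weights, x, epsilon=0.15)
adv_score = score(weights, bias, x_adv)

print("clean:", clean_score > 0, "adversarial:", adv_score > 0)
```

The score drops by epsilon times the sum of the absolute weights. With only four features the shift has to be fairly large to flip the label, but with thousands of input dimensions a far smaller per-feature change has the same effect, which is why such perturbations can be hard to notice.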

4. AI Systems Continuously Learn and Drift

Many AI systems adapt over time. Models may be retrained on new data or have dynamic parameters (especially in online learning). This means their behavior can drift, intentionally or unintentionally. Security teams must continuously validate AI outputs and watch for anomalies. Explainable AI (XAI) tools can help analysts understand why a model made a decision, flagging if something seems off. Cloud workloads, in contrast, are generally static and predictable: once a server is patched, its behavior is fixed until the next update. Cloud security focuses on static configuration drift, not behavioral drift. With AI, behavioral monitoring becomes as important as infrastructure monitoring.
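Behavioral monitoring can start with something as simple as comparing the distribution of a model's output scores today against a trusted baseline. The sketch below uses the Population Stability Index (PSI) as one possible drift measure. The bin edges, the sample scores, and the 0.2 alert threshold are illustrative assumptions that would need tuning for a real system.

```python
import bisect
import math


def bin_proportions(values, edges, floor=1e-6):
    """Return the share of values in each bin; out-of-range values go to the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = bisect.bisect_right(edges, v) - 1
        idx = min(max(idx, 0), len(counts) - 1)
        counts[idx] += 1
    # The floor keeps empty bins from producing log(0) below.
    return [max(c / len(values), floor) for c in counts]


def psi(baseline, live, edges):
    """Population Stability Index between baseline and live score samples."""
    p = bin_proportions(baseline, edges)
    q = bin_proportions(live, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]    # hypothetical
live_scores = [0.8, 0.85, 0.9, 0.95, 0.7, 0.6, 0.9, 0.99]     # hypothetical

ALERT_THRESHOLD = 0.2  # assumption; choose per model and risk appetite
drift = psi(baseline_scores, live_scores, edges)
if drift > ALERT_THRESHOLD:
    print(f"drift alert: PSI={drift:.2f}")
```

A high PSI does not say why the distribution moved. It could be an attack, a data pipeline fault, or a genuine change in the population, so an alert like this is a trigger for investigation rather than a verdict.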

5. AI Has a Complex Supply Chain

Modern AI solutions pull together a wide supply chain: open-source model checkpoints, pretrained embeddings, third-party datasets, and containerized services. Each component can introduce vulnerabilities or biases. Security must consider the provenance of every piece (akin to an AI-specific SBOM). This is the domain of MLSecOps, the practice of securing MLOps pipelines. For instance, vulnerabilities in a shared Python library or a poisoned open-source model could compromise your AI system. In the cloud world, you worry about unpatched OS packages; in AI, you worry about poisoned model weights or malicious data providers.

In summary, AI security shifts the focus from just infrastructure to intelligence. Models, data, pipelines and AI decision logic become first-class security concerns. This marks a radical departure from cloud security’s assumptions, necessitating new tools and mindsets.

How Traditional Cloud Security Falls Short for AI Workloads

Because AI workloads have these unique characteristics, traditional cloud security controls are insufficient in several ways:

- Multitenancy Risks in GPU Environments: AI often requires GPU clusters. Cloud GPU instances may be co-located with others (multitenant), opening side-channel or cross-VM leak risks. Classic cloud models (like hypervisor isolation) weren’t built for contiguous memory patterns of neural nets. For example, a malicious tenant might attempt to snoop on GPU memory to infer model parameters. Current cloud security doesn’t specifically monitor GPU-sharing, so new isolation strategies (like GPU sandboxing or dedicated hardware) may be needed.

- Lack of Model-Level Monitoring: Cloud monitoring tools track CPU, memory, and network metrics. They lack visibility into a model’s internal state or performance. If an AI model starts producing biased or unexpected outputs, a cloud SIEM won’t catch that. We need model-specific metrics (prediction distributions, feature importance shifts, confidence scores) and anomaly detection tailored to ML, which traditional cloud security tools do not provide.

- Inference Endpoint Exploitation: AI services expose inference endpoints (APIs, chat interfaces, etc.) that accept rich inputs (text, images, audio). Attackers can craft malicious inputs to exploit these (e.g. embedded malware in images, or prompt injections). Generic API gateways or WAFs might block known exploits, but they don’t understand how to neutralize a malicious prompt that tricks an LLM. In practice, companies have seen attackers use LLMs themselves to automate probing of cloud APIs for vulnerabilities, requiring AI-specific filtering and rate-limiting.

- Limited AI-Specific Threat Modeling: Security teams often model threats at the network, host, or identity level. But for AI they need to consider new threat scenarios: what if a model is stolen? What if a chat window is hijacked to disseminate misinformation? If teams don’t include these in their threat modeling, they remain blind to critical vulnerabilities.

- Compliance Gaps in AI Governance: Most cloud compliance checklists cover encryption, audit logs, and identity. They rarely cover AI ethics or transparency. Emerging regulations (like the EU AI Act) and frameworks (NIST AI RMF, the OWASP Top 10 for LLM Applications) introduce requirements unheard of in cloud compliance. For example, NIST AI 600-1 calls for detailed documentation of training data sources, and the OWASP Top 10 for LLM Applications highlights risks like data poisoning and prompt injection. A CSPM (Cloud Security Posture Management) scan won’t catch missing bias-impact analysis or undocumented model lineage. Thus, purely relying on cloud audit controls leaves enterprises exposed to regulatory and reputational risk.

In essence, cloud security tooling will continue to protect the physical and virtual infrastructure around AI, but it provides only part of the picture. Organizations need AI-aware defenses to cover what cloud tools inherently miss.
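The rate limiting mentioned for inference endpoints can be sketched as a per-client sliding window. This is a minimal illustration, not a production design: the class name, the limits, and the client key are hypothetical, and timestamps are passed in explicitly so the behavior is easy to follow (a real service would typically read a monotonic clock).

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` inference requests per client in any `window` seconds."""

    def __init__(self, max_calls: int, window: float):
        self.max_calls = max_calls
        self.window = window
        self._calls = defaultdict(deque)  # client id -> timestamps of accepted calls

    def allow(self, client_id: str, now: float) -> bool:
        calls = self._calls[client_id]
        # Drop timestamps that have fallen out of the window.
        while calls and now - calls[0] >= self.window:
            calls.popleft()
        if len(calls) >= self.max_calls:
            return False
        calls.append(now)
        return True


limiter = SlidingWindowLimiter(max_calls=3, window=60.0)
decisions = [limiter.allow("key-123", t) for t in (0.0, 1.0, 2.0, 3.0, 61.0)]
print(decisions)  # [True, True, True, False, True]
```

A limiter like this slows bulk querying, which raises the cost of model extraction, but a patient attacker can stay under the limit. Pairing it with monitoring of longer-term query patterns per client closes part of that gap.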

AI-Specific Threats Enterprises Must Address

Enterprises deploying AI must explicitly address threats unique to AI:

- Data Poisoning: Attackers manipulate training data so the model learns false associations or backdoors. For instance, subtly labeling malicious inputs as benign so that at inference the model misclassifies dangerous inputs as safe. Poisoning can happen during crowdsourced data collection or through compromised data pipelines. Defenses include data validation, provenance tracking, and differential privacy.

- Model Extraction: An adversary queries an AI model (often via its API) many times to reverse-engineer it. By analyzing inputs and outputs, the attacker can reconstruct a copy of the model, stealing intellectual property and bypassing usage restrictions. Rate limiting, output perturbation (adding noise), and monitoring unusual query patterns are typical countermeasures.

- Model Inversion and Membership Inference: These attacks aim to recover details of the training data from a model. For example, by probing a face recognition model’s outputs, an attacker might reconstruct images of individuals it was trained on. This violates privacy. Defenses include limiting the output information (no confidence scores), differential privacy in training, and strict access controls.

- Prompt Injection (in LLMs): Attackers feed malicious prompts to a language model to override or manipulate its behavior. For instance, they might trick a chatbot into revealing confidential system prompts or performing harmful actions by embedding commands in seemingly innocent input. This is analogous to SQL injection for LLMs. Mitigations involve sanitizing inputs, isolating system-level instructions from untrusted input so they are harder to override, and rigorous adversarial testing.

- Adversarial Examples (Evasion): In vision or other perceptual AI, tiny perturbations imperceptible to humans can cause wildly incorrect outputs. One classic example is adding stickers to a stop sign so a self-driving car ignores it. Such attacks require robust model training (e.g. adversarial training) and input-validation defenses.

- Federated Learning Security Risks: In federated learning, multiple parties collaboratively train a model without sharing raw data. This setup opens new risks: a malicious participant can inject poisoned updates or data (Byzantine attacks) to corrupt the global model. The server aggregating updates is also a target. Defenses include secure aggregation techniques, anomaly detection on client updates, and Byzantine-resilient algorithms.

Each of these AI-specific threat categories demands dedicated mitigation strategies that simply do not exist in a baseline cloud security toolset.
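As one example of such a dedicated mitigation, the Byzantine-resilient aggregation mentioned for federated learning can be illustrated with a coordinate-wise median. This sketch assumes each client sends its update as a flat list of floats; the update values are invented, and real systems would combine this with secure aggregation and anomaly detection.

```python
from statistics import median


def mean_aggregate(updates):
    """Plain federated averaging: one outlier can drag every coordinate."""
    return [sum(col) / len(col) for col in zip(*updates)]


def median_aggregate(updates):
    """Coordinate-wise median: robust as long as most clients are honest."""
    return [median(col) for col in zip(*updates)]


# Hypothetical model updates from four honest clients and one malicious client.
honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.22], [0.11, -0.19]]
malicious = [[50.0, 50.0]]
updates = honest + malicious

print("mean:  ", mean_aggregate(updates))
print("median:", median_aggregate(updates))  # [0.11, -0.19]
```

With averaging, the single malicious update pushes the first coordinate above 10. The median stays within the range of the honest updates, at the cost of discarding some information from honest clients.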

Building a Robust AI Security Posture

To defend AI systems effectively, enterprises should adopt a multi-faceted approach combining frameworks, tools, and processes:

- Implement AI Risk Management Frameworks: Organizations can leverage emerging standards like NIST’s AI Risk Management Framework (AI RMF). The NIST AI RMF (released in January 2023) provides voluntary guidance for managing AI trustworthiness, from design to deployment. It organizes this work into four functions (Govern, Map, Measure, and Manage) and names trustworthiness characteristics that include security and resilience, privacy, and explainability. Additionally, the OWASP Top 10 for LLM Applications highlights common vulnerabilities (prompt injection, data poisoning, etc.) that teams should explicitly check. The MITRE ATLAS framework (Adversarial Threat Landscape for Artificial-Intelligence Systems) can be used to understand real-world AI attack patterns and inform threat models.

- Secure the AI Lifecycle: Embed security at every stage of the MLOps pipeline – from data collection and preprocessing, through model training, to deployment and post-deployment monitoring. This can include:

· Development: Use code scanning on AI code and infrastructure-as-code, vet open-source libraries (like PyTorch or TensorFlow) for vulnerabilities, enforce secure coding standards.

· Training: Isolate training environments, encrypt datasets, perform data lineage tracking, and conduct adversarial testing (e.g. simulate poisoning or evasion attacks on the model).

· Deployment: Harden inference servers, enable strict API authentication, and containerize models with minimal privileges. Encrypt model files at rest and in transit.

· Monitoring: Continuously observe model inputs/outputs. Use anomaly detection to flag drift or unexpected behavior. Apply explainable AI tools so that security teams can audit why certain decisions were made. Retrain or roll back a model if significant drift or bias is detected.

- AI Governance and Compliance: Establish policies around AI use. Map out the data flow and model flow end-to-end (an “AI data flow map”) and assign ownership of each component. Ensure compliance with regulations like GDPR (for privacy of training data) and AI-specific laws (the EU AI Act, state AI policies). Document AI workflows (who has access to the model, how decisions are made) to demonstrate auditability. Train staff in AI ethics and security best practices. A formal governance process (often involving an AI ethics board or council) helps enforce consistency and oversight.

- Threat Modeling for AI Systems: Go beyond generic threat models. Identify AI assets (datasets, models, inference APIs) and map potential attack paths between them. For each asset, list realistic threat scenarios: What if an insider tampers with the data lake? What if a supply-chain compromise injects a backdoored model? Determine safeguards (e.g. input validation, multi-party approval for model retraining). Red-team exercises should include AI-specific scenarios (like performing a prompt injection attack on the demo chatbot or trying to infiltrate training pipelines). Tools like attack trees or the MITRE ATLAS matrix can structure this analysis.

By integrating these measures, an enterprise can achieve a strong AI security posture — one that treats AI models and data as high-value digital assets and monitors them constantly, rather than assuming they are automatically protected by underlying cloud security.

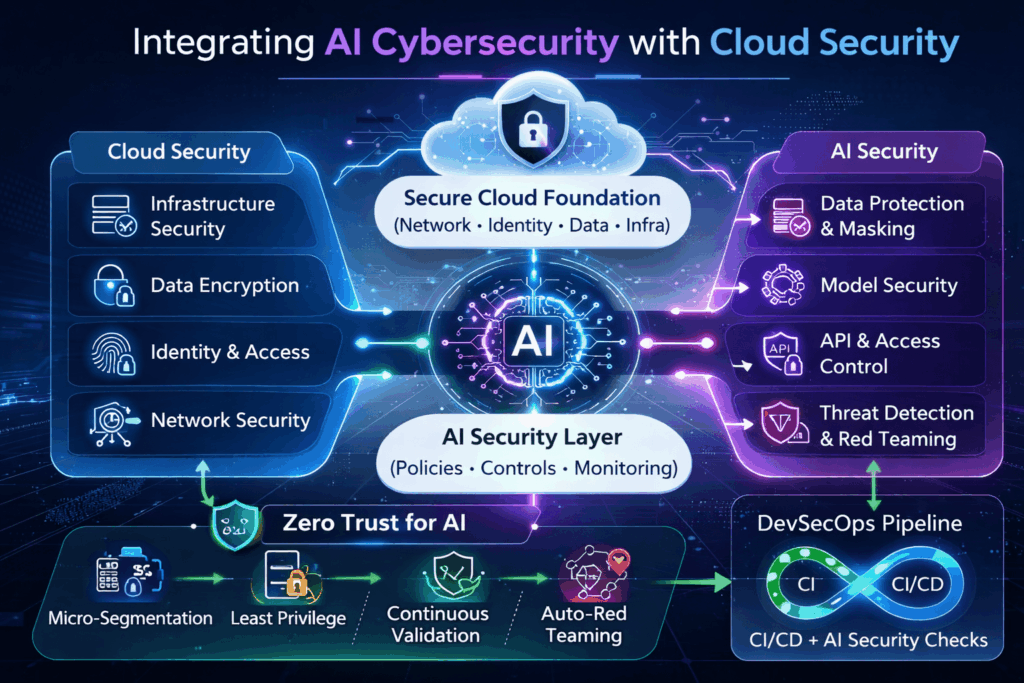

Integrating AI Cybersecurity with Cloud Security

While AI security is distinct, it should complement, not replace, cloud security. The two domains can reinforce each other:

- Complementary Roles: Cloud security continues to provide a hardened, monitored infrastructure (networks, VMs, identity). AI security operates on top of that: it secures the “last mile” of intelligence. For example, encrypting data at rest (cloud control) is necessary but not sufficient — we also must encrypt training data copies and manage data masking within the AI platform.

- Zero Trust for AI Systems: Apply Zero Trust principles to AI. Just as Zero Trust treats every user or device as untrusted until verified, do the same for AI components. Segregate AI workloads into micro-segments, authenticate every call to an AI API, and enforce least privilege on model runtimes. For instance, an AI agent that accesses financial data should have only read access to necessary tables, and every model update should be cryptographically signed before deployment. This ensures that even if an attacker penetrates the cloud network, they can’t hijack the AI model without authorization.

- AI Threat Protection with Automation: Leverage AI to help defend AI. Many security platforms now embed AI for anomaly detection or IOC correlation. For example, endpoint agents may use ML to flag suspicious fileless malware, which secures the infrastructure that AI runs on. Conversely, specialized AI defenses (like an AI firewall for LLM prompts) can automatically sanitize inputs before they reach the model. Continuous automated red-teaming (generative AI that tries attack scenarios) can proactively find gaps. The goal is continuous validation: AI systems demand constant vigilance and rapid response, which only automation can deliver at scale.

- Continuous Validation and Red Teaming: Just as cloud security encourages regular pentests, AI security needs its own red teaming. This means routinely testing models with adversarial inputs and probes, as well as auditing data pipelines for tampering. Tools like synthetic data generators, adversarial example frameworks, and open-source evaluation suites can constantly stress-test your AI. Align this with DevSecOps: only deploy models that have passed rigorous AI-focused security checks.
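The Zero Trust point about signing every model update before deployment can be sketched with a keyed hash over the model file. This is a simplified stand-in under stated assumptions: it uses a shared-secret HMAC from the Python standard library, whereas a production pipeline would more likely use asymmetric signatures so that deployment hosts hold only a public key. The key and the model bytes here are placeholders.

```python
import hashlib
import hmac


def sign_artifact(artifact: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the serialized model."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()


def verify_artifact(artifact: bytes, key: bytes, signature: str) -> bool:
    """Check the tag in constant time before the model is allowed to load."""
    expected = sign_artifact(artifact, key)
    return hmac.compare_digest(expected, signature)


signing_key = b"demo-key-do-not-use"          # placeholder; load from a secrets manager
model_bytes = b"\x00\x01fake-model-weights"   # stand-in for a serialized model file

signature = sign_artifact(model_bytes, signing_key)
ok = verify_artifact(model_bytes, signing_key, signature)
tampered = verify_artifact(model_bytes + b"backdoor", signing_key, signature)

print("original accepted:", ok)
print("tampered accepted:", tampered)
```

The deployment step would refuse to load any model whose tag fails to verify, so an attacker who can write to the model store still cannot swap in altered weights without also obtaining the signing key.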

In practice, we see the industry moving toward unified platforms that handle both cloud and AI security. For example, cloud-native AI security platforms now exist that run distributed AI models on endpoints and cloud alike, continuously enforcing policies across environments. These aim to remove the disjointness between the two domains: you secure cloud misconfigurations with the same platform that identifies a rogue AI prompt.

Ultimately, blending cloud and AI security means acknowledging that securing modern workloads is a layered problem. Infrastructure security lays the foundation, but AI security builds the intelligence safeguards on top.

How Cygeniq Secures Enterprise AI

Cygeniq’s unified AI security platform exemplifies how to protect AI from these specialized threats. Key capabilities include:

- Model-Level Protection and Adversarial Defense: Cygeniq’s HexaShield AI continuously tests and protects AI models. It applies adversarial attacks against your models in real time to detect vulnerabilities, and monitors the models, datasets, prompts, and agent APIs for misuse. In effect, HexaShield treats the model as a guarded asset: it enforces strict access controls, performs dynamic robustness assessments, and can quarantine or roll back a model if tampering is detected.

- End-to-End AI Governance: The GRCortex AI module delivers comprehensive AI governance. It enforces policies across the AI lifecycle, maintains a complete inventory of models and data, and generates audit-ready reports. GRCortex aligns to global AI regulations and frameworks, providing the documentation and visibility needed for compliance. For example, it tracks who has trained which model on what data, enforces bias mitigations, and ensures privacy requirements are met before a model goes to production.

- AI Security Posture Management: Cygeniq’s platform unifies posture management across on-prem, cloud, and hybrid AI environments. It continuously monitors all AI assets (models, pipelines, agents) for emerging threats. Using an AI-driven SOC layer (CyberTiX AI), it correlates threat signals in context. If a cloud workload anomaly coincides with a model drift event, for example, it triggers an automated investigation. This gives enterprises 24/7 autonomous threat detection tailored to AI workloads.

- Regulatory-Compliant AI Environments: Cygeniq embeds compliance into AI operations. Its governance engines map to regulations like GDPR, the EU AI Act, and NIST AI RMF. For instance, if a new generative AI regulation requires documenting data lineage, Cygeniq can automatically generate the evidence from its secure AI BOM. The platform ensures that as regulations evolve, enterprises can update policies centrally rather than bolt on patchwork solutions.

By integrating these capabilities into one platform, Cygeniq helps organizations treat AI as the high-value asset it is, securing it just like any critical business asset, but with AI-specific defenses.

Real-World Example: What Happens When AI Isn’t Properly Secured

Consider a cautionary scenario: data leakage via AI tooling. In March 2023, OpenAI took ChatGPT offline after a bug exposed some users’ data to other users. The cause was a flaw in a third-party open-source component: redis-py, the Redis client library used for caching, could return cached data belonging to a different user. As a result, some users saw the titles of other users’ conversations, and OpenAI reported that payment-related details for about 1.2% of ChatGPT Plus subscribers active during a nine-hour window may also have been visible. Conversation titles and prompts can contain proprietary business data or personal information. This incident highlights several lessons:

- Supply-Chain Vulnerability: A single bug in an open-source component (the redis-py client) in the AI stack caused the exposure. It underscores the need to harden not just your own code, but all components in the AI supply chain.

- Data Exposure: Because user conversations are central to ChatGPT’s service, exposing them directly compromised user privacy and trust. Repercussions included regulatory scrutiny and user backlash.

- Rapid Spread: The exposure window lasted only hours, yet because the service is shared by a global user base, the bug could surface one user’s data to strangers anywhere. A traditional breach (like a compromised server) might remain isolated to an internal network; here, every active user was potentially in scope.

Now imagine a targeted attack with greater motive: an adversary poisons the training data of a deployed medical AI. The model learns a subtle bias (e.g. misdiagnosing a condition in certain demographics). By the time the bias is noticed, patients could be harmed and the hospital faces lawsuits and fines. Or consider a fraudulent actor extracting a financial firm’s trading algorithm by repeatedly querying a prediction API, then replicating it to compete against the firm. These real and hypothetical incidents show the business impact: IP theft, regulatory fines, brand damage, and even physical harm.

The takeaway: Without AI-specific security measures, powerful AI capabilities can become catastrophic liabilities. Protecting AI is not optional; it is essential.

Conclusion: AI Requires a New Security Mindset

AI is reshaping technology at machine speed, and security must keep pace. In today’s landscape, infrastructure security alone is insufficient. AI models, data pipelines, and decision logic are high-value digital assets that attackers will aggressively target.

Enterprises must expand their security paradigm: adopting AI-focused threat modeling, continual model monitoring, and AI-aware compliance. They must trust, but verify, every AI action. As one CTO quipped, “cybersecurity for AI must treat models like crown jewels, not just bits to protect, but brains to defend.”

Bold action is needed now. Assess your AI risk, embed security in every AI project, and invest in solutions purpose-built for AI threats. Because once malicious actors figure out how to leverage or hack your AI, the consequences could be far more damaging than any traditional breach.

Secure your AI systems with the same rigor as any mission-critical asset – before attackers turn your own intelligence against you.

Frequently Asked Questions (FAQ)

What is AI cybersecurity?

AI cybersecurity (or AI security) means protecting AI systems end-to-end. It covers securing the machine learning pipeline (data, models, code, and outputs) against threats. This includes defending against model theft (IP loss), data poisoning, adversarial inputs, and ensuring AI decisions are trustworthy and compliant. In short, it’s applying cyber defenses specifically to the unique components of AI.

How is AI security different from cloud security?

Cloud security focuses on infrastructure (servers, networks, storage) and typical IT threats (malware, misconfiguration, insider threats). AI security, by contrast, focuses on models, data, and inference. For example, cloud tools secure your database, while AI security ensures the AI model built on that data isn’t poisoned or stolen. Cloud security assumes workloads are static; AI security deals with dynamic, learning-based systems. Thus, AI security adds layers like adversarial defense, model encryption, and data integrity checks that classic cloud tools lack.

What are the biggest risks in securing artificial intelligence?

The top AI-specific risks include: data poisoning (tampering with training data to subvert the model), model theft (extracting or copying proprietary models), adversarial inputs (crafting inputs that cause wrong outputs), prompt injection (manipulating LLM responses), and model inversion (reconstructing sensitive training data from the model). Federated learning also introduces risks where malicious participants can corrupt the collaboratively trained model. These go beyond normal IT threats and require new defenses.

Can traditional cloud security tools protect AI models?

Traditional tools (firewalls, CSPM, WAFs, etc.) help secure the cloud around AI systems (networks, VMs, data at rest). But they cannot secure the AI model’s logic or data pipeline on their own. They won’t detect an attacker probing an AI API to steal the model, nor spot if a dataset has been poisoned. In other words, cloud tools leave an “AI security gap” – security teams admit that “existing monitoring wasn’t designed for AI models”. To protect AI models, you need AI-specific solutions (like model firewalls, training data validators, continuous model testing).

What is the role of MLOps security in AI protection?

MLOps security (sometimes called MLSecOps or MLDevSecOps) integrates security into the machine learning development lifecycle. Its role is to ensure that at each stage (data collection, preprocessing, training, validation, deployment, monitoring) there are controls to catch security issues. For example, an MLOps pipeline might include automated scans of incoming data for anomalies, checks for adversarial robustness during training, and sandboxed testing of models before release. The goal is to be “secure by design”, building AI with security in mind from day one. Just as DevSecOps secures software builds, MLOps security secures AI workflows.

Why is AI governance important for compliance?

AI governance provides the policies, oversight, and documentation needed for regulated AI use. New laws (like the EU AI Act) and guidelines (NIST AI RMF) require things like bias mitigation, transparency, and data lineage in AI systems. Good governance ensures an organization meets these standards. It sets who can train or deploy models, how ethics reviews are done, and how audits are prepared. Without governance, companies risk fines and loss of trust. In other words, AI governance turns ad-hoc AI projects into controlled, auditable processes – which is crucial for compliance and accountability.

Feb 24,2026 By prasenjit.saha
