AI Risk Management in Retail Enterprises: Strategies and 8 Best Practices

In today’s retail industry, AI powers everything from demand forecasting to personalized marketing. While these technologies promise efficiency and new insights, they also introduce critical risks: biased algorithms, privacy breaches, fraud, and compliance issues. Forward-looking retailers treat AI risk management as a core practice. By building robust governance and controls around their AI systems, companies can protect customer trust and unlock AI’s benefits.

As IBM notes, AI risk management is “the process of systematically identifying, mitigating, and addressing the potential risks associated with AI technologies,” aiming to minimize downsides while maximizing benefits. Retailers are already acting on this: one report found that 61% now require in-depth training on AI ethics and guardrails, and that 83% of retail leaders are confident in their AI outputs. This guide dives deep into the key risks of AI in retail and offers actionable strategies, from governance frameworks to hands-on controls, that enterprises must adopt to stay safe and compliant.

Why AI Risk Management Matters for Retailers

AI has rapidly become integral to retail operations, automating inventory management, personalizing customer experiences, and predicting sales trends. According to KPMG, 71% of retail leaders expect significant returns on AI investments. At the same time, new vulnerabilities emerge whenever AI touches customer data or decision-making processes.

Retailers have already seen real-world examples of AI gone wrong: biased facial recognition systems falsely flagging shoppers as suspects, or recruitment algorithms favoring one gender over another. The stakes are high: unaddressed AI risks can lead to data leaks, consumer distrust, regulatory penalties, or even business fraud.

For instance, a recent legal briefing warns that nearly 30% of retail fraud attempts are now AI-generated, from deepfake refund scams to fake storefronts. In this environment, AI risk management isn’t just a checkbox – it’s essential to safeguard revenue, reputation, and customers. It builds trust: when customers see transparent AI practices and data security, they feel confident interacting with smart shopping tools. It also ensures compliance: evolving laws like the EU AI Act and US guidelines are already treating high-risk AI (e.g. biometric or decision-making tools) with strict rules. In short, managing AI risk means protecting customers and the business while fully leveraging AI’s potential.

Key AI Risks in Retail

Retailers face a unique mix of AI-related threats that span technical, ethical and operational domains. Major risks include:

• Data Privacy and Security

AI relies on vast customer and transaction datasets, which can be vulnerable. An AI model’s training data might be exposed to breaches or hacking. Even without an external attack, misuse of personal data by a flawed AI system can violate GDPR or CCPA. Protecting data integrity and confidentiality throughout the AI lifecycle, from data collection to model updates, is critical. For example, tampered inventory data could lead to faulty demand forecasts and lost sales.

• Algorithmic Bias and Fairness

If historical data contains biases, AI can inadvertently perpetuate discrimination. A “next-best-offer” engine might favor certain demographics, or a credit-approval AI might learn prejudiced patterns. The result can be regulatory fines or reputational damage. Industry experts stress inclusive data practices and bias auditing: diverse training datasets, fairness metrics, and human oversight are essential to catch skewed outcomes.

• Fraud and Security Threats

Retail is a top target for AI-enabled scams. Criminals now deploy deepfake voice bots, counterfeit invoices, and fake social media profiles to defraud stores. AI tools can even generate synthetic product images to boost bogus refund claims. Traditional fraud detection must be augmented with AI-aware controls, for instance, to flag anomalous patterns that suggest deepfakes or multiple accounts. Ongoing monitoring of transactions and prompt flagging (as Securitas notes) “can significantly strengthen fraud prevention measures”.

• Operational and Integration Risks

AI models can degrade over time (model drift) or malfunction because of software bugs. If a pricing algorithm fails, it could sell stock at a loss. If a chatbot is hijacked by malicious prompts, it might churn out misleading information. Every AI deployment introduces points of failure, so retailers need continuous model testing and fallback plans. As IBM points out, 96% of leaders worry generative AI increases breach risk, yet only 24% of projects were secured in one study. Proactive controls (e.g. adversarial testing, access restrictions, incident response plans) are needed.

• Regulatory and Compliance Risks

New laws and consumer protections are emerging. The EU’s AI Act categorizes systems by risk and bans unacceptable uses. In the US, agencies are eyeing AI-driven ads and consumer content; a 2023 executive order, since rescinded, called for guidance on labeling AI-generated content. The FTC has made clear it will penalize “negligent or irresponsible” AI use. Retailers must continuously update their AI governance to align with these evolving rules and avoid fines.

• Reputational Risks

Beyond formal regulations, negative media or activist campaigns can hit retailers. If AI recommendations echo hate speech, or if a predictive system appears to profile customers unethically, public trust can erode overnight. Ensuring transparency (e.g., explaining when AI is used) and aligning with ethical values are as much PR issues as technical ones.

In short, AI in retail can improve performance, but it also opens diverse risk vectors. A structured risk management approach helps address them systematically.

Strategies for AI Risk Management in Retail

Given these challenges, retailers need a proactive risk framework. Industry advisors recommend a mix of policy, process and technology controls. The following strategies (adapted from best practices) can guide a retail AI program:

• Education & Training

Make sure all stakeholders, from executives to store staff, understand AI basics and risks. Training programs should cover how AI tools work, what data they use, and how to spot issues. Clear communication (“why we use AI and what its limits are”) builds an informed culture. According to experts, educating staff on AI “fundamentals, risks, and benefits” through transparent dialogue is the first step.

• Governance & Policies

Align AI projects with existing corporate policies. Establish an AI governance committee or designate risk officers. Create written standards for AI development and deployment, covering data handling, model validation, incident response, and ethics. For example, set approval processes before new AI tools go live. Retail frameworks like the NRF guidelines emphasize internal oversight and vendor accountability: retailers should require AI vendors to share governance practices and integrate AI controls into contracts. A strong governance structure ensures clear ownership – everyone from marketing to IT knows which policies to follow.

• Comprehensive Risk Assessments

Conduct formal AI risk assessments at every stage. Before launching an AI solution, map out potential harms: What data is used? Who could be affected? (Employees, customers, partners?) What happens if the model fails or is exploited? As one AI blueprint suggests, perform risk assessments “after solution ideation” to identify use cases, harms and mitigations. Repeat these assessments periodically to account for changes (data drift, new features). Integrating real-time monitoring (for anomalies in AI outputs) is also vital.
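
The assessment questions above can be recorded in a simple risk register. The following is a minimal sketch of one way to do that, not a prescribed method: the `RiskEntry` fields, the 1 to 5 likelihood and impact scales, and the review threshold of 12 are all illustrative assumptions rather than values from any cited framework.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    system: str        # AI system under assessment
    harm: str          # what could go wrong
    affected: str      # who is affected (customers, employees, partners)
    likelihood: int    # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int        # assumed scale: 1 (minor) to 5 (severe)
    mitigation: str    # planned control

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring (an assumed convention)
        return self.likelihood * self.impact


def prioritize(entries, review_threshold=12):
    """Return entries at or above the threshold, highest score first."""
    flagged = [e for e in entries if e.score >= review_threshold]
    return sorted(flagged, key=lambda e: e.score, reverse=True)


# Hypothetical register entries for illustration only
register = [
    RiskEntry("pricing-engine", "sells below cost after bad input",
              "customers", 2, 4, "price floor check"),
    RiskEntry("support-chatbot", "leaks personal data via prompt injection",
              "customers", 3, 5, "output filtering"),
    RiskEntry("demand-forecast", "drift causes stockouts",
              "partners", 4, 3, "monthly retraining"),
]

top_risks = prioritize(register)
for entry in top_risks:
    print(entry.system, entry.score, entry.mitigation)
```

Re-running the same scoring after each periodic reassessment gives a rough view of whether a system's risk profile is moving.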

• Data Security & Privacy

Protect the underlying data rigorously. Implement strong encryption, access controls, and anonymization where possible. For instance, separate personally identifiable information from AI datasets. Given that “AI systems often handle sensitive personal data,” robust security (both technical and procedural) is needed. Regularly audit data pipelines to ensure customer data isn’t inadvertently exposed. Also, practice privacy by design: don’t collect data you don’t need, and comply with regulations like GDPR/CCPA in how AI uses customer data.
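
One way to separate personally identifiable information from an AI dataset is to replace the customer identifier with a keyed hash and route direct identifiers to a separate store. The sketch below assumes a hypothetical record layout and a hypothetical `PII_FIELDS` list; the key shown is a placeholder. Note that this is pseudonymization rather than anonymization, so the output would typically still count as personal data under GDPR.

```python
import hashlib
import hmac

# Assumed list of direct identifiers; adjust to your own schema
PII_FIELDS = {"name", "email", "phone"}


def pseudonymize_id(customer_id: str, secret_key: bytes) -> str:
    """Keyed SHA-256 hash, so the token cannot be recomputed without the key."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def split_record(record: dict, secret_key: bytes):
    """Return (pii_part, model_part); only model_part should reach the AI dataset."""
    token = pseudonymize_id(record["customer_id"], secret_key)
    pii_part = {"token": token, "customer_id": record["customer_id"]}
    model_part = {"token": token}
    for field, value in record.items():
        if field == "customer_id":
            continue
        if field in PII_FIELDS:
            pii_part[field] = value
        else:
            model_part[field] = value
    return pii_part, model_part


# Placeholder key: in practice this would come from a secrets manager
key = b"replace-with-a-key-from-your-secrets-manager"
record = {
    "customer_id": "C-1001",
    "name": "Ada Example",
    "email": "ada@example.com",
    "basket_total": 84.50,
    "store": "north-12",
}
pii, features = split_record(record, key)
print(features)
```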

• Bias Mitigation & Ethical AI

Actively audit models for bias. This involves testing AI outputs across different demographics and scenarios. Use explainable AI tools if possible, so decision logic isn’t a black box. Ensure diverse representation in your datasets, development teams, and governance bodies to reduce skew and prevent discriminatory outcomes. Communicate policies to customers: for example, tell them how you prevent discrimination in your AI systems, which builds confidence.
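
Testing outputs across demographics can start with something as simple as comparing outcome rates per group. The sketch below computes per-group selection rates and their ratio for a hypothetical offer engine. The group labels and sample counts are made up, and the 0.8 review threshold borrows the "four-fifths" rule of thumb from US employment-selection guidance; it is an assumption here, not a retail standard.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, received_offer) pairs -> rate per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, received_offer in outcomes:
        totals[group] += 1
        if received_offer:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody received the outcome, so no group is favored
    return min(rates.values()) / highest


# Made-up audit sample: 40% of group_a and 24% of group_b received the offer
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 24 + [("group_b", False)] * 76
)

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed review threshold
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove discrimination on its own; it is a signal to hand the case to a human reviewer with more context.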

• Vendor and Third-Party Oversight

Many retailers use cloud AI services or third-party algorithms. Treat these as extensions of your risk surface. Include AI governance clauses in vendor contracts: require transparency about their model training, data usage, and security practices. Integrate AI vendor risk into your procurement process. For example, before adopting a new AI tool, verify it meets your internal AI standards (fairness, security, explainability). Regular third-party audits or questionnaires can help ensure outside models follow good practices.

• Customer Trust & Transparency

Finally, consider the customer perspective. Be clear when AI is involved (e.g., labeling AI-generated content). Provide channels for customers to ask questions about how their data is used or how recommendations are made. Smooth out the customer experience: coordinate between teams so that any AI-driven feature (chatbot, personalization, checkout) feels consistent and secure. Clarkston Consulting advises integrating risk procedures “with business partners supporting your AI tools” to ensure a smooth, transparent experience. Transparent AI builds loyalty: customers are more forgiving of mistakes when they trust your intentions.

Implementing all these requires tailored planning. The mix will vary by retailer size, technology maturity and customer base. For example, a national chain with diverse demographics might emphasize ethical audits and data privacy, while an online retailer might focus on fraud prevention and model monitoring.

AI Governance Frameworks and Compliance

No retailer can navigate AI risks in isolation. Industry standards and regulations provide proven frameworks and expectations. Some of the most important references are:

• NIST AI Risk Management Framework (AI RMF)

Developed by the National Institute of Standards and Technology, this voluntary framework is widely cited as a best practice for any organization using AI. It guides companies to define AI “goals and objectives,” identify risks, implement controls and monitor outcomes in a continuous loop. As one guide notes, NIST’s framework helps firms “identify, assess, and manage risks associated with AI” in a structured way. Retailers can use it to benchmark their policies and fill gaps.

• ISO/IEC 42001 – Management Systems for AI

This international standard (published in December 2023) specifies requirements for an auditable AI management system. It covers governance, risk management, and ethical considerations. Achieving ISO/IEC 42001 certification (or aligning with it) can reassure regulators and customers that your AI practices are mature.

• EU AI Act and Global Regulations

The EU AI Act (phased implementation through 2026) categorizes AI applications by risk. High-risk systems (like biometric scanners or loan approval AIs) face strict requirements (documentation, human oversight). Retailers selling in Europe must check if any deployed AI tools (e.g. in stores, staffing, marketing) fall under high risk. In the US, an executive order on AI and upcoming FTC guidance mean even more scrutiny on areas like false advertising and data misuse. Staying ahead of these rules (by, for example, labeling AI content or documenting model validations) turns compliance from a burden into a competitive edge.

• Industry Best Practices (e.g. NRF Principles)

The National Retail Federation has published AI guidelines specific to retail. These emphasize internal oversight (to prevent discriminatory outcomes in customer-facing AI) and third-party accountability. Joining industry groups or following consortium guidelines can also help you stay updated on emerging expectations.

By embedding these frameworks into your AI program, you ensure consistency and credibility. For example, set up regular compliance reviews: map each AI system to its regulatory category (using EU/US guidance), then apply appropriate controls. Document all steps for audit purposes. Many larger retailers are already moving this way – a KPMG survey found that retailers who integrate governance early face lower long-term risks.
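
The compliance review described above (map each system to a category, then check its controls) can be sketched as a small gap report. Everything in this example is hypothetical: the category names only loosely echo the EU AI Act's risk tiers, the control lists are illustrative, and classifying a real system would need legal review.

```python
# Hypothetical mapping from risk category to the controls your policy requires
REQUIRED_CONTROLS = {
    "high": ["technical documentation", "human oversight", "model validation record"],
    "limited": ["AI content labeling"],
    "minimal": [],
}


def compliance_gaps(systems):
    """systems: list of dicts with 'name', 'category', 'controls_in_place'.

    Returns {system name: [missing controls]} for systems with gaps.
    """
    gaps = {}
    for system in systems:
        required = REQUIRED_CONTROLS[system["category"]]
        missing = [c for c in required if c not in system["controls_in_place"]]
        if missing:
            gaps[system["name"]] = missing
    return gaps


# Made-up inventory for illustration
inventory = [
    {"name": "store-biometric-entry", "category": "high",
     "controls_in_place": ["technical documentation"]},
    {"name": "marketing-copy-generator", "category": "limited",
     "controls_in_place": []},
    {"name": "recommendation-widget", "category": "minimal",
     "controls_in_place": []},
]

report = compliance_gaps(inventory)
for name, missing in report.items():
    print(name, "is missing:", ", ".join(missing))
```

Keeping the report output alongside the review date gives auditors a simple record of what was checked and when.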

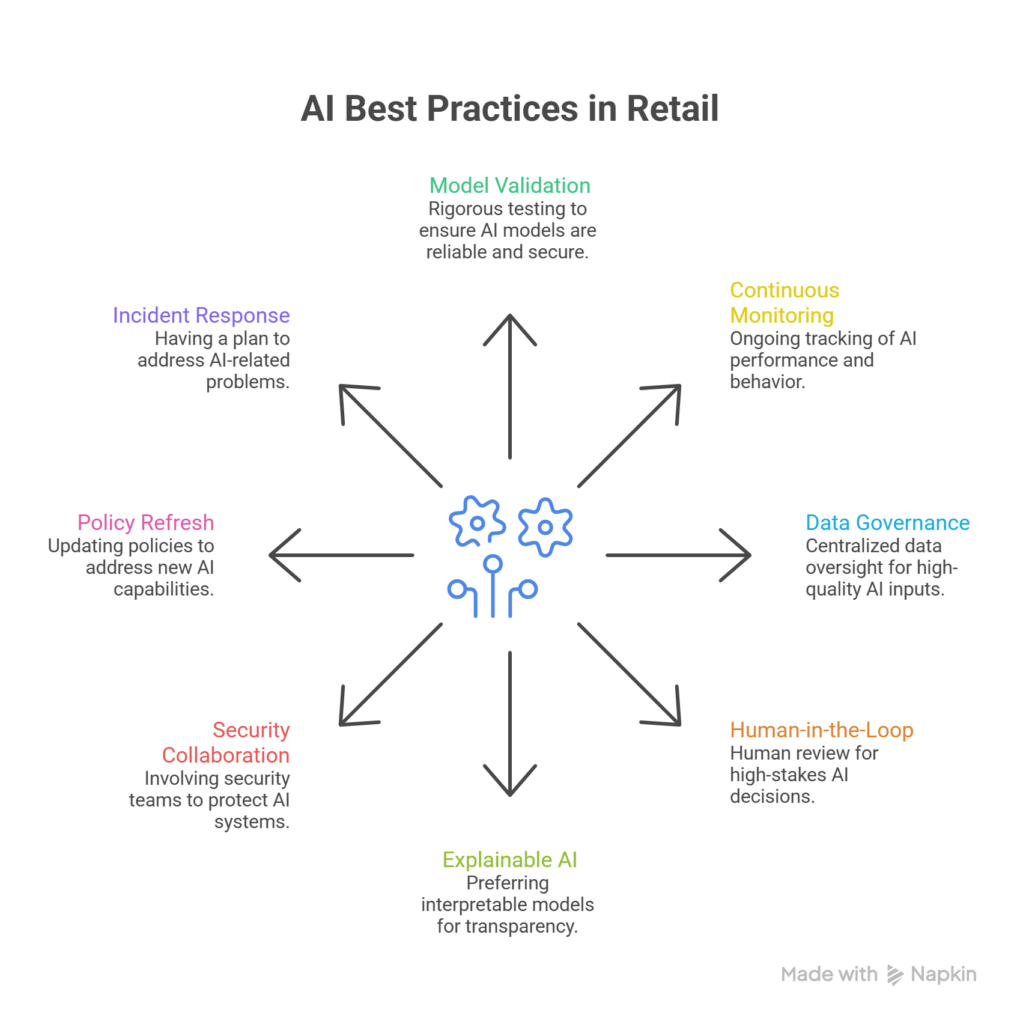

8 Best Practices and Tools

Building on the strategies above, here are some concrete practices and emerging tools that retail enterprises can leverage:

1. Model Validation & Testing

Before any AI model goes live, test it rigorously. Use synthetic data scenarios to probe for failures (e.g. adversarial testing to check security). Employ bias-detection software to flag unfair outcomes. Some retailers run “shadow modes” where an AI makes decisions behind the scenes without affecting customers, to compare outcomes with human processes first.
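
A shadow-mode trial can be evaluated by logging the model's decision next to the human decision for the same case and comparing them afterwards. The sketch below assumes a hypothetical log format with `case_id`, `human_decision`, and `model_decision` fields; the sample cases are invented.

```python
def shadow_report(cases):
    """Compare logged human and model decisions.

    cases: list of dicts with 'case_id', 'human_decision', 'model_decision'.
    Returns (agreement_rate, list of disagreeing cases).
    """
    disagreements = [c for c in cases if c["human_decision"] != c["model_decision"]]
    agreement_rate = 1 - len(disagreements) / len(cases) if cases else 0.0
    return agreement_rate, disagreements


# Invented refund-approval log: the model ran silently while staff decided
shadow_log = [
    {"case_id": "R-1", "human_decision": "approve", "model_decision": "approve"},
    {"case_id": "R-2", "human_decision": "approve", "model_decision": "approve"},
    {"case_id": "R-3", "human_decision": "decline", "model_decision": "approve"},
    {"case_id": "R-4", "human_decision": "decline", "model_decision": "decline"},
    {"case_id": "R-5", "human_decision": "approve", "model_decision": "approve"},
]

rate, to_inspect = shadow_report(shadow_log)
print(f"agreement: {rate:.0%}")
for case in to_inspect:
    print("inspect", case["case_id"], case["human_decision"], "vs", case["model_decision"])
```

The disagreeing cases are usually more informative than the headline rate, since they show where the model and staff diverge before any customer is affected.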

2. Continuous Monitoring

Once deployed, AI models should not be “set and forget.” Use dashboards and alerts to watch for unusual behavior – for example, a sudden spike in declined transactions might mean a fraud model is malfunctioning. Cloud providers and specialized vendors now offer AI monitoring platforms that track drift, performance metrics, and compliance logs.
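
Drift tracking of the kind these platforms offer can be approximated with a Population Stability Index (PSI), which compares the distribution of a model input or score in a baseline period against a recent window. This is a minimal sketch, not any vendor's implementation: the bin edges, sample values, and the 0.2 alert threshold (a commonly quoted rule of thumb) are assumptions.

```python
import math


def psi(expected, actual, bin_edges, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample.

    Values outside the bin edges are ignored; empty bins are floored at eps
    so the logarithm stays defined.
    """
    def proportions(values):
        counts = [0] * (len(bin_edges) - 1)
        for v in values:
            for i in range(len(counts)):
                last = i == len(counts) - 1
                in_bin = bin_edges[i] <= v < bin_edges[i + 1]
                if in_bin or (last and v == bin_edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts) or 1
        return [max(c / total, eps) for c in counts]

    p_expected = proportions(expected)
    p_actual = proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_expected, p_actual))


# Invented order values: the recent window has shifted toward larger baskets
edges = [0, 25, 50, 75, 100]
baseline = [10] * 50 + [30] * 30 + [60] * 15 + [90] * 5
recent = [10] * 20 + [30] * 30 + [60] * 30 + [90] * 20

drift = psi(baseline, recent, edges)
ALERT_THRESHOLD = 0.2  # assumed threshold
if drift > ALERT_THRESHOLD:
    print(f"drift alert: PSI={drift:.3f}")
```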

3. Data Governance Framework

Centralize data oversight. Maintain a “single source of truth” for customer data if possible, so that AI systems draw from high-quality inputs. Track data lineage (where data came from and how it has been transformed) to aid transparency. Regularly audit data for bias or errors that could skew AI outputs.

4. Human-in-the-Loop (HITL)

For high-stakes decisions (like credit limits or fraud flags), ensure a human reviews or overrides AI suggestions. This can be automated (flag certain cases for review) or culture-driven (analysts double-check algorithms in real time).
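
The automated variant (flagging certain cases for review) can be expressed as a small routing rule. In this sketch, `model_score` is assumed to be a fraud probability between 0 and 1; the score band and the amount limit are illustrative values, not recommendations.

```python
def route_decision(model_score, amount, score_band=(0.30, 0.70), amount_limit=500.0):
    """Return 'auto_approve', 'auto_decline', or 'human_review'.

    model_score: assumed fraud probability from the model (0 to 1).
    amount: transaction value; large amounts always go to a reviewer.
    """
    low, high = score_band
    if amount >= amount_limit:
        return "human_review"   # high-stakes case: a person decides
    if model_score < low:
        return "auto_approve"   # model is confident the case is legitimate
    if model_score > high:
        return "auto_decline"   # model is confident the case is fraudulent
    return "human_review"       # model is uncertain


# Invented transactions: (fraud score, amount)
for score, amount in [(0.10, 50.0), (0.50, 50.0), (0.90, 50.0), (0.10, 900.0)]:
    print(score, amount, route_decision(score, amount))
```

Widening the score band sends more cases to reviewers, which trades staff time for fewer unreviewed automated decisions.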

5. Explainable AI (XAI)

Where feasible, prefer models that can be interpreted. For example, decision trees or explanation layers for neural nets help compliance teams explain to regulators how a decision was reached. Such transparency is crucial for both internal trust and external audits.

6. Collaboration with Security Teams

Treat AI systems like any other asset in your cybersecurity program. Involve InfoSec teams to conduct penetration testing on AI APIs and to include AI systems in incident response plans. If an attacker attempts prompt injection or model poisoning, the attempt must be detected quickly.

7. Regular Policy Refresh

As new AI capabilities (like generative models) emerge, update your policies. For instance, customer-facing chatbots may now need to handle deepfake input attempts – add guidance on verifying identity or rejecting suspicious requests.

8. Incident Response Plan

Have a clear action plan if an AI system causes a problem. This could include customer notification procedures, rollback mechanisms, and remediation steps. A quick, responsible response can preserve trust even if something goes wrong.

Conclusion

AI is transforming retail operations, but with that power comes responsibility. Retail enterprises that proactively manage AI risk, through training, governance, assessments and the right technologies, will not only avoid pitfalls but also gain customer trust and a competitive advantage. The strategies and frameworks outlined above provide a roadmap: embed strong policies early, keep human oversight, and continuously adapt as AI evolves.

Frequently Asked Questions

What exactly is AI risk management in the context of retail?

AI risk management involves identifying and mitigating the potential pitfalls of using AI in retail – such as biased recommendations, data leaks, fraud exposure and regulatory non-compliance. It’s about building guardrails (policies, checks and tools) to ensure AI enhances business outcomes without harming customers or violating rules.

Why should a retail enterprise prioritize AI risk management?

Because the costs of unmanaged AI are high. A biased pricing algorithm can alienate customers or draw legal scrutiny, while an unchecked AI chatbot can leak sensitive info. Retailers operate on thin margins and tight competition; a single AI-related scandal can damage reputation and incur fines. Managing AI risk protects revenue and trust. Moreover, as surveys show, companies with mature AI risk practices feel more confident in their AI’s ROI.

What are some common AI risks specific to retail?

Typical risks include biased customer profiling, AI-enabled fraud (like deepfakes for fake returns), supply chain prediction failures, data breaches in AI databases, and privacy compliance failures. Operationally, AI models can degrade, and integration with existing systems can create vulnerabilities. Being aware of these retail-specific use cases helps focus the risk management plan.

What frameworks or standards should retailers use?

Key references include the NIST AI Risk Management Framework, ISO/IEC 42001 for AI management systems, and evolving laws like the EU AI Act. Retailers may also follow industry guidelines (NRF AI principles) and general security standards (PCI DSS for payments, SOC 2 for security controls). Aligning with these frameworks ensures your policies meet or exceed expectations.

How do I start implementing AI risk management in my retail organization?

Begin by auditing your current AI use cases: list all AI tools in use (from chatbots to pricing engines), and classify them by risk level. Next, form a cross-functional team (IT, legal, marketing) to create an AI governance policy. Include training for key staff and set up simple monitoring (logs, alerts). Gradually build out risk assessments for each AI system, and adopt one standard framework (like NIST) to guide you. It’s an iterative process – start small, and expand controls over time.

Apr 06,2026 By prasenjit.saha
